As I read the news, think about it, and talk about current events with others, I realize that there is a conceptual gap between how I think about things and how others might, particularly those with whom I disagree on process despite agreeing on desirable outcomes.

I want a peaceful political environment for my children to live in. I would like it if they could freely pursue their dreams and ambitions. I hope that our environment does not degrade, and in particular that we humans do not knowingly degrade it excessively. I hope that our economies will be prosperous enough that we do not suffer hunger and cold. I hope the rule of law persists.

In all these objectives I find myself agreeing with almost everyone I meet. It is in the processes by which we might achieve these objectives that I find myself in disagreement with all but a small few (thanks Christy!). Yet I know I am right. How can this be?

Decades ago, in the late eighties, when I was in the midst of my Master’s work in Electrical Engineering (more specifically, computing), a number of emerging studies and discoveries informed me and changed my understanding of almost everything. In 1975 Feigenbaum had discovered his constant, arguably as important in the understanding and mathematical modeling of reality as Euler’s e and pi. Benoit Mandelbrot had published his Fractal Geometry of Nature in 1982, so that new view of nature had already had some time to seep into at least parts of the global consciousness (the part that liked pretty pictures!). Douglas Hofstadter’s The Mind’s I and Gödel, Escher, Bach: An Eternal Golden Braid were placing recursion and self-reference front and centre, to a limited audience, anyway. James Gleick had just published Chaos in 1987, and it exploded onto the bestseller lists, so its ideas were fresh, “out there”, and in the public mind’s eye. The field of artificial intelligence was a happening thing, and with the advent of serious, low-cost computing power, a new approach to computation had come to the fore: artificial neural networks. Mimic the structures of the brain and intelligence can be taught, was the theory.

And, most importantly of all, the Internet Protocol (IP) and the Transmission Control Protocol (TCP) had been created and released from the garden within DARPA’s walls, and the Internet Engineering Task Force (IETF) had been formed, allowing a new type of network, with a new way of specifying its own evolution, to emerge onto the global scene. Admittedly, in the late ’80s there still wasn’t much there, and it wasn’t very interesting to anyone but us computer geeks. But because I had been reading about and studying all of the above, it was clear to me what was going to happen in the future (and, I might add now, has happened and is happening, though I don’t claim, like Mr Gore, that I invented the Internet), and I shouted out my own personal eureka: “The Internet will save Man from himself, and it is the only thing that can!”

It is not as though we actually need saving, as the universe at large couldn’t give a hoot, but in my own human-selfish way, I think it desirable that we stop killing and starving and torturing each other. Call me a softie. In that epiphanic instant, I saw that there was indeed a way, a process in which I now take part by typing these words to you. I do hope you will try to follow along as I attempt to lead you to my “Aha!” In this post, I seek simply to establish that the human mind is not the only thing capable of “thought”, not the only thing that is “conscious”, and not the only thing capable of “intent”, “action”, and “opinion”. To more fully understand ourselves, nature, and the universe, we must expand our notions.

My son, informed by his professor of social anthropology, has pointed me to “memes” and their study. While that concept gets across parts of the idea, I consider here something that exists outside and above the human mind, whereas in Dawkins’ original concept the meme exists within it, I believe. Let’s begin with a bit of anatomy to make things clearer.

Neurons

A human neuron is a pretty simple thing, really. Here is a picture of one (from http://www.mindcreators.com/NeuronBasics.htm):

A dendritic tree accepts synaptically-weighted input from other neurons. The main function of the neuron, the decision to “fire” or not, occurs as a non-linear threshold function in the soma. The axon hooks one neuron’s output up to many other neurons’ inputs. It isn’t really all that hard to mimic the function in software or hardware (or human processes); for example, here is a simple electronic implementation in only 11 transistors. For comparison, the computer you are reading this with has somewhere between 1 million and 100 million transistors (from http://diwww.epfl.ch/lami/team/vschaik/eap/neurons.html):
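Mimicking that function in software really is this small. Below is a minimal sketch that assumes a hard step threshold; real neurons, and most artificial ones, use smoother functions. The function name `fire` and the example weights are hypothetical. It also shows that the same upstream signals can make one neuron fire and leave another silent, depending only on how each weights them.

```python
def fire(inputs: list[float], weights: list[float], threshold: float) -> bool:
    """Hypothetical threshold neuron: fires when the weighted input sum reaches the threshold."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one synaptic weight")
    # Dendritic tree: sum the synaptically-weighted inputs.
    activation = sum(x * w for x, w in zip(inputs, weights))
    # Soma: a non-linear (step) threshold decides whether to fire.
    return activation >= threshold


# Three upstream neurons: two currently firing (1.0), one silent (0.0).
upstream = [1.0, 0.0, 1.0]
print(fire(upstream, [0.6, 0.9, 0.5], threshold=1.0))  # 0.6 + 0.5 = 1.1, prints True
print(fire(upstream, [0.2, 0.9, 0.3], threshold=1.0))  # 0.2 + 0.3 = 0.5, prints False
```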

The points I want you to understand:

1) the basic building block of the human brain, the neuron, without which a human mind cannot exist, is extremely simple;

2) at the input, neurons are highly connected. One neuron may receive (differently weighted!) input from tens or hundreds of thousands of other neurons;

3) similarly, at its output, a neuron may be connected to many, many other neurons;

4) every neuron is “free” to listen to a source neuron with its own weight, as it sees fit. Just because neuron A considers neuron C very important does not mean neuron B also has to;

5) (something I haven’t yet shown/proven, but hey, it is true!) the entire system is distributed and “self-organized”. There is no clear “centre”, no-one is “boss”, and all connections are formed and weighted independently, through a variety of local, not global, rules. The global rules apply only to the process of neuron formation and neural interconnection, not to the outcomes of which specific neuron is connected to which other, and with what weight.
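The last point says that weights are set by local rules. Hebb’s rule is one classic example of such a rule: a connection strengthens when the neurons on both ends are active together. It is offered here purely as an illustration, not as a claim about which rules real brains use. In this minimal sketch each synapse updates using only its own pre- and post-synaptic activity. The learning rate, the names, and the externally supplied `post` activity are all hypothetical simplifications.

```python
def hebbian_update(weights: list[float], pre: list[float], post: float, rate: float = 0.1) -> list[float]:
    """Return new weights for one neuron using Hebb's rule: dw_i = rate * pre_i * post."""
    # Each weight changes using only information local to its own synapse:
    # the activity of the sending neuron (pre_i) and of this neuron (post).
    return [w + rate * x * post for w, x in zip(weights, pre)]


# Two neurons hear the same three sources but have different histories,
# so they end up weighting those sources differently. No central "boss" is involved.
sources = [1.0, 0.0, 1.0]
a = [0.5, 0.5, 0.5]
b = [0.5, 0.5, 0.5]
for _ in range(5):
    a = hebbian_update(a, sources, post=1.0)  # neuron A fired along with the sources
    b = hebbian_update(b, sources, post=0.0)  # neuron B stayed silent
print([round(w, 2) for w in a])  # [1.0, 0.5, 1.0]
print([round(w, 2) for w in b])  # [0.5, 0.5, 0.5]
```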

Cortical “columns”

Despite the last point, above, there do exist emergent sub-structures in brains, highly-interconnected subsets of neurons that appear to compute certain sub-functions, like “friend” or “enemy” or “I am hungry”.

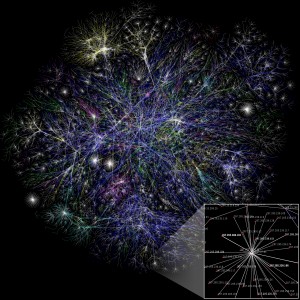

My epiphany in the ’80s was my realization as I typed a message into a Usenet newsgroup (the techno-troglodyte ancestor to this blog) that I was a neuron, the newsgroup was a cortical column (struggling with the concept of free trade between Canada and the United States, in that case), and that, at some point, the system would evolve such that something meaningful would be attached to the output, as opposed to just us geeks. Take a look at the picture below, created from the global Internet routing tables, circa 2007. Even the very, very best team of engineers, or the very best network optimization software would not be able to design this beastly network anywhere near as efficiently as the evolving self-organized free interaction of privately-owned IP routers has done for us. Make sure to click on it, zoom in and have a good look:

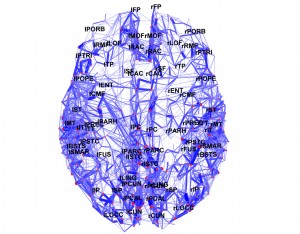

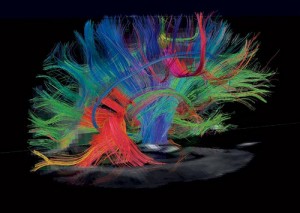

Compare that to some maps and images of the brain:

or, for that matter (pardon the pun), images you have seen from Hubble. See any similarities? They are all freely-evolved, self-organized complex systems, and they all exhibit similar forms of emergent order. They are all fractal and statistically self-similar, with properties that are invariant under scaling. All the things that were being said back in the ’70s and ’80s are true; it is just taking us a long time to realize it, and to understand the implications.
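A power law is one standard way to make “invariant under scaling” precise. It is offered here as an illustration of the idea, not as a measurement of the images above. Suppose some quantity f (say, how many features of size x a picture contains) follows a power law in x. Then rescaling x by any factor λ only multiplies f by a constant, and the shape of the relationship stays the same:

```latex
f(x) = C\,x^{-\alpha}
\quad\Longrightarrow\quad
f(\lambda x) = C\,(\lambda x)^{-\alpha} = \lambda^{-\alpha}\,C\,x^{-\alpha} = \lambda^{-\alpha} f(x)
```

Here C and α are arbitrary positive constants. On a log-log plot such a relationship is a straight line of slope −α, whatever scale you zoom to.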

While the Internet has blossomed in many ways since that day, becoming vastly more accessible to the non-technical community, we unfortunately have still not wired in much of importance in terms of the actual governance of society. Blogs such as this might assist in forming independent opinion, but on the whole, we have barely scratched the surface of what the Internet will evolve into (if “we” let it). But it is a good start, and I am encouraged. I’ll insert a brief plea that you help it stay as free as possible, because without freedom and diversity, it will die.

Awareness vs Self-awareness

We humans think we are pretty special, but we need frequently to remind ourselves how simple we are in the grand scheme if we are to do a decent job of meeting the objectives laid out at the beginning of this post. Intellectual arrogance cannot possibly assist us in achieving our (I assume shared!) objectives of peace and prosperity. A sea slug with a tiny fraction of our neurons has survived for millions of years: will we, with our hundred billion or so, last longer? Smarter does not necessarily mean wiser.

Despite the above, there can be no question that human intelligence and consciousness have arisen within the structure of our brains, and that they are remarkable properties indeed. The self-organized collection of very many, very simple, very interconnected neurons exhibits a remarkable new property: the ability to reason. There are so many neurons in a brain that it doesn’t matter if some of them make a mistake and fire when they are not supposed to, or die off. Enough other neurons perform a similar (though not exactly identical) function that the error is washed out by subsequent neurons’ non-linear thresholding functions. Are there other analogous examples of this type of emergent intelligence? Yes. All around us, in fact.
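A simple model makes the claim that errors are “washed out” by redundancy concrete. This sketch assumes, hypothetically, that each of n redundant neurons misfires independently with probability p, and that the downstream neuron’s threshold amounts to a majority vote over them. Under those assumptions the downstream neuron errs only when more than half of its inputs err, which is the tail of a binomial distribution:

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a majority of n independent units (n odd) are wrong,
    when each unit is wrong with probability p."""
    if n % 2 == 0:
        raise ValueError("use an odd n so a majority is always defined")
    # Sum the binomial probabilities of exactly k wrong units, for every k that forms a majority.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 11, 101):
    print(n, majority_error(n, 0.1))
```

With p = 0.1, a lone neuron is wrong 10% of the time. Eleven redundant neurons are outvoted by their own error only about 0.03% of the time, and the figure keeps shrinking as n grows.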

Self-organization and “natural laws”

An ecosystem is just such a thing, though some would object to using the word “intelligence” to describe the property of an ecosystem that allows it to persist in equilibrium (more correctly, homeostasis) despite a highly variable environment. If a cold winter results in fewer plants for the herbivores to eat next summer, fewer herbivores will survive, and so the rate at which plants get consumed will drop. This is a gross simplification, but it gets across the notion of feedback, and of negative feedback in particular. In its control-theoretic sense, “negative” feedback is not bad; rather, it is quite good. The “negative” in the backwards (feedback) direction means that a positive change in the output produces a corresponding negative change in an input to the system, so that stability can emerge. Positive feedback, on the other hand, is very bad indeed, as it almost always leads to instability, runaway populations of certain species, and ecosystem death.
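The difference between the two kinds of feedback shows up even in a one-line update rule. This is a minimal sketch; the gain and set-point values are hypothetical. Each step nudges a quantity (think of the herbivore population) by an amount proportional to its deviation from a set point. With negative feedback the nudge opposes the deviation; with positive feedback it reinforces it.

```python
def simulate(x0: float, setpoint: float, gain: float, steps: int) -> list[float]:
    """Iterate x <- x - gain * (x - setpoint) and return every value.

    0 < gain < 1: negative feedback, each step shrinks the deviation from the set point.
    gain < 0: positive feedback, each step enlarges the deviation.
    """
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x - gain * (x - setpoint))
    return xs


negative = simulate(x0=150.0, setpoint=100.0, gain=0.5, steps=10)
positive = simulate(x0=150.0, setpoint=100.0, gain=-0.5, steps=10)
print(round(negative[-1], 2))  # 100.05: the deviation halves every step
print(round(positive[-1], 2))  # 2983.25: the deviation grows by half every step
```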

We have, with the best of intentions, and many times over, sought to “improve” upon nature’s balance, introducing rabbits and cane toads in Australia, and DDT and other pesticides everywhere, and always with unexpected results. The US Army Corps of Engineers has sought to control the Mississippi, with obvious disastrous consequences. John McPhee enumerates many of these in The Control of Nature, in which he convincingly demonstrates we can’t. Control nature any better than she controls herself, that is. In the face of complexity, any attempt at control always produces unintended consequences in the long run.

The key to ecosystem stability is diversity. Without sufficient diversity of species, so that long chains of negative feedback loops can form, an ecosystem may “wander off” in one particular direction, to its own detriment. The dead bacteria in a petri dish spring to mind. Those who worry about species extinction, particularly in unique ecosystems, are right to do so. Chaos and complexity theory suggest that once diversity falls below some critical threshold, an ecosystem will collapse. This is the reason I find biosphere projects so funny. I just don’t think the folks involved realize how complex the system would need to be to have a hope of long-term stability, as we have (we hope!) on Earth.

The functions that an ecosystem computes, akin to intelligence in a brain, are answers to the questions “what is the optimal allocation of species to allow this ecosystem to persist?” and “how should I, as a species, evolve so that I might better my odds of survival within this ecosystem?” The number of each species will rise and fall in balance to ensure the system as a whole stays stable. The species will evolve to better take advantage of niches in this particular ecosystem. We don’t know exactly why, we just know that it is true, and that it answers those particular questions very, very well.

I think about the Galapagos marine iguana. What were all the “decisions” that led to the fact that these particular iguanas swim, eat seaweed, and spit salt out of their noses, when no others do any of these things? Were the “right” decisions made? Is it “good” that we have salt-spitting iguanas, or would something else have been “better”? Here I introduce the notion of the tautological optimality of outcomes in systems created through the process of self-organization. No one can say anything would have been better. In fact, because we know that every species seeks its own survival as the natural consequence of individuals just trying to make a living, we know that the system has computed an optimal outcome, but only for the exact set of initial conditions: in this case, say, when the first non-swimming, regular iguana washed up on the Galapagos’ shores. Had things been just very slightly different, we might have had flying lobsters instead. And then they would be the optimal outcome, and we could not argue otherwise.

Free-market economies are another example of self-organized systems that compute complex functions and produce optimal outcomes. Human activity will alter in a free-market economy to ensure that “economic gaps” are filled: if something could be done more efficiently, it will be, because there will be profit in doing it the better way. Modern economies compute problems of immense complexity, seemingly by magic. In fact, no-one knows anymore how to do the simplest things; only the economy as a whole does.

Who knows today the best way to build a pencil? Yes, trivially, we all know it is carbon surrounded by wood, with a brass ferrule and some rubber on the end, but what is the best process by which to assemble the pieces? The best source of carbon? How do we find and smelt brass? How on earth does one make pink synthetic rubber? It might scare some people to think that no-one knows all the processes required to make something as simple as a pencil, because it requires faith that we don’t need to know: the economy as a whole knows this for us. The knowledge has moved from the articulable (“use a piece of charcoal”) to the implicit, to a knowledge that is distributed, holographic. But the prize we get in return for this abandonment of knowledge and control is a decent HB instead of a lump of coal. Heck, we get ultra-smooth Uniball pens, and laptops with keyboards like this one! The participants and stuff in the economy are the neurons, and in this case a bunch of them collect in a cortical column to create one artifact, a pencil. But only the entire brain, the entire economy, is conscious of how to pull the whole thing together. We have gained some control of our economic lives (and achieved great social good in the process) only by abandoning knowledge and control to the system as a whole.

The other day I suddenly visualized the entire global economy in a way much like the map of the Internet, or a brain, above. Imagine each good or service or person or company or government is a point, and each is interconnected to those it uses, and in turn to those that use it. The “synaptic weight” of each link can be a measure of value, or percent composition, say. Done? OK, here is the interesting part: pick up the pencil. How much of the whole global economy do you think is attached to that pencil? Aside from obvious duplication (we might easily have two different smelters, both of which smelt brass), you will find that you get the whole darn thing. Seriously. If just one worker ate a banana while chopping the tree for the wood, we gotta include a banana plantation. It isn’t a strong connection, but it is there. Oh, and he was wearing jeans and leather boots and using a chainsaw… by the end, everything is connected.
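This thought experiment is a graph-reachability question: start at the pencil, follow every “uses” link, and see how much of the graph you touch. The minimal sketch below uses a tiny, entirely made-up dependency graph. Every node name is hypothetical and stands in for the real economy, and the link weights are ignored, since even a weak link still connects.

```python
from collections import deque

# Hypothetical "uses" links: each item points to the goods and services it depends on.
USES = {
    "pencil": ["wood", "graphite", "brass ferrule", "eraser"],
    "wood": ["logger"],
    "logger": ["chainsaw", "banana", "jeans", "boots"],
    "chainsaw": ["steel", "gasoline"],
    "banana": ["plantation", "shipping"],
    "brass ferrule": ["smelter"],
    "smelter": ["copper mine", "zinc mine", "electricity"],
    "graphite": ["mine"],
    "eraser": ["synthetic rubber"],
    "synthetic rubber": ["petrochemicals"],
    "gasoline": ["petrochemicals"],
    "shipping": ["gasoline", "steel"],
}


def reachable(start: str, graph: dict[str, list[str]]) -> set[str]:
    """Breadth-first search: every node connected to `start` by a chain of links."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


connected = reachable("pencil", USES)
every_node = set(USES) | {d for deps in USES.values() for d in deps}
print(f"{len(connected)} of {len(every_node)} nodes are attached to the pencil")  # 21 of 21
```

In this toy graph everything is reachable by construction. The point is only that a single banana link is enough to pull the plantation, the shipping, and the fuel behind it into the pencil’s tree.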

In all these complex self-organized systems, something special happens at a level above that of the atoms of the system, be they neurons, or IP routers, or market participants, or plants and animals. Order develops at a higher level. A market “remembers” how to build pencils, people don’t. The higher level of the hierarchy can appear to have intent, will, and memory. This can play havoc with someone of a conspiracy-theorist mindset, as there can exist every sign of a conspiracy, but the intent is held by no one participant, it is held holographically by all, or perhaps a subset, the self-organized cortical column that effects the “conspiracy”. It matters not whether the intent is explicit at the lower level, or implicit at one level up, all that matters is that the system is behaving in a certain way.

Certainty, uncertainty, causality and prediction

Once systems reach a certain level of complexity, with lots and lots of interconnections and negative feedback loops, it becomes almost meaningless to say things like “A caused B”. Some correlations may seem obvious, like the temperature/plant/herbivore relationship, or interest rates/money supply/GDP growth, but even these correlations usually apply only within a limited range. And we often (often!) mistake correlation for causality in such systems. I fear that today we are dangerously close to dramatic instability in our (complex!) financial systems because we have failed to heed this point. We can only make the most general statements about complex systems and hope to be correct. Beware the economist who states that an x% increase in y will result in GDP growth of z. I assure you he is either lying or a fool. This is why the public, in search of (unachievable) certainty, longs for one-armed economists: the pundit, knowing that what I say is true, will always follow a definitive, precise prediction of the future with “on the other hand…”
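The logistic map is a standard toy illustration of why precise long-range prediction fails. It is the same simple equation in which Feigenbaum found his constant. This sketch uses r = 4.0, the fully chaotic case, and arbitrary starting values. Two runs that begin one part in a billion apart soon bear no resemblance to each other:

```python
def logistic_trajectory(x0: float, r: float = 4.0, steps: int = 60) -> list[float]:
    """Iterate the logistic map x <- r * x * (1 - x), returning every value."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


a = logistic_trajectory(0.2)
b = logistic_trajectory(0.2 + 1e-9)  # an almost imperceptibly different starting point

for step in (0, 10, 20, 30, 40, 50, 60):
    print(step, abs(a[step] - b[step]))
```

Early on the gap is tiny, but on average it roughly doubles each step (the map’s Lyapunov exponent at r = 4 is ln 2). After about thirty steps, the two “forecasts” are unrelated, even though the rule is simple and fully known.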

In Summary

This is why I disagree with people on process and policy though we agree on desirable outcomes. Because I know that almost everything around me is very complex and exhibits chaotic behaviour, I know I cannot (nor can anyone) effectively predict outcomes in the big picture, over the long term. So I know I must not attempt to control the system, and to the extent I do try to control it, I will bias the system away from an optimal balance. I try to keep my circle of concern (and attempted control) within my circle of influence (the areas where I can be reasonably sure of predictable outcomes), and I have faith that if we all did so, provably optimal results would occur. I understand the incredible order-generating power of self-organization, but many so far do not, and I think it is simply because no-one has told them all this stuff.

The best results we can hope to achieve will result from fair and transparent processes, with freedom and a lack of coercive forces on individual participants, so that the system can exhibit its own intelligence, evolve and self-organize towards tautologically optimal outcomes for all. I want my kids to be able to swim in clean water, or fly in clean air, and eat seaweed if they want to. But I won’t tell them that they have to be a lizard or a lobster, they and the system they create for themselves will have to make that decision. Crunch!
